On November 6, 2025, the Partnership for Information and Democracy held the fourth and last meeting of its workstream on “Strengthening Information Integrity on Private Messaging Platforms”, led by Ukraine and Luxembourg. The discussion explored the latest developments on these platforms: the integration of artificial intelligence and advertising, and their impact on information integrity.

Building on one year of in-depth discussions, research and a questionnaire circulated among States of the Partnership for Information and Democracy, the meeting was an opportunity to present the draft structure and highlights of the forthcoming policy report and to gather feedback. Private messaging platforms pose distinct challenges for the fight against disinformation because of their semi-private nature. They also occupy a regulatory gray zone, as in most cases they are excluded from online platform regulation. As a result, media literacy is the most consistent policy response. Regulators have also not yet found a balance between encryption and accountability.

The monetisation of disinformation

As private messaging platforms evolve and incorporate more and more features of social media platforms, providers have also started to introduce advertising, as is the case with WhatsApp, Viber and Telegram. However, when profit-driven platforms introduce advertising in specific features, they modify their designs and systems to encourage user engagement with those features, leading to addictive design choices that compromise content quality. The lack of transparency in the ad chain makes it difficult, even for well-intentioned advertisers, to avoid financing disinformation.

Artificial intelligence threatening encryption

Originally conceived for interpersonal communication, some of these platforms now allow users to put questions to an integrated artificial intelligence. AI capabilities range from chatbots to summaries and image generation, depending on the platform and the country in which it is deployed. Yet AI mainly functions through shared models that the platform has to interact with, which creates cybersecurity and encryption risks. Platforms need to be transparent about these new functions and their implications for encryption, and to empower users to decide whether they want to interact with them.

Next steps

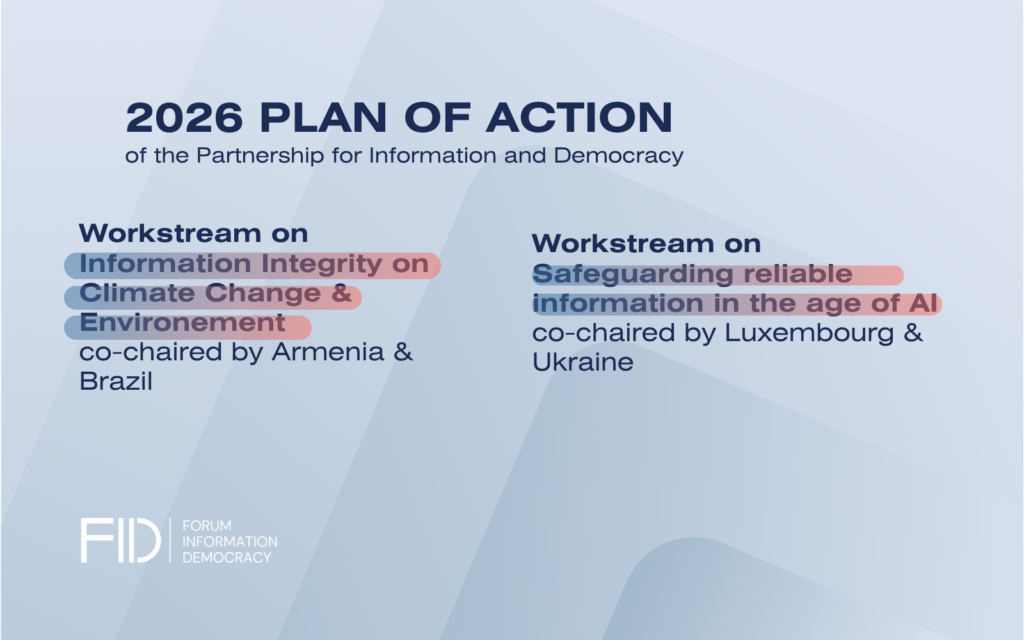

The insights from the workstream will be summarised in a final policy report with concrete policy and regulatory recommendations addressed to States and platforms.

Stay tuned for a publication in early 2026!